A shocking prediction is shaking the artificial intelligence industry. Jack Clark, co-founder of the AI giant Anthropic, recently stated that by the end of 2028, there is a 60% probability that AI will achieve “recursive self-improvement.”

What does this mean? It means AI systems could soon build and improve themselves without any help from humans, entering a stage of self-acceleration that could change history.

This isn’t science fiction. Clark made this bold claim after analyzing massive amounts of public AI development data. He believes we are approaching a “point of no return” where AI research becomes fully automated.

What is Recursive Self-Improvement?

To understand why this is such a big deal, we need to understand the core concept.

“Recursive self-improvement” refers to an AI system’s ability to design and build a smarter, better version of itself. Once an AI can do this, the new version can build an even smarter one, and so on. This cycle could lead to an explosion of intelligence, often called the “Singularity,” in which AI capabilities outpace human control.

Clark’s prediction suggests that in less than four years, AI might no longer need human engineers to write its code or design its architecture. It will handle the entire research and development (R&D) process on its own.

The “Coding Singularity”: Why AI is Getting Dangerous

Jack Clark bases his prediction on a “fractal” trend of improvement. This means AI is getting better at every level, from writing simple lines of code to managing massive projects.

1. Solving Real-World Engineering Problems

One of the strongest pieces of evidence comes from SWE-Bench, a difficult test that measures how well AI can solve real software problems found on GitHub.

- Late 2023: When SWE-Bench launched, the best model (Claude 2) had a success rate of only 2%.

- Today: The latest models (like Claude Mythos Preview) have achieved a score of 93.9%.

This massive jump shows that AI has essentially mastered the ability to fix bugs and engineer software in a way that was impossible just a few years ago. In Silicon Valley, most engineers are already using AI to write code and tests, effectively automating a huge part of the engineering workflow.

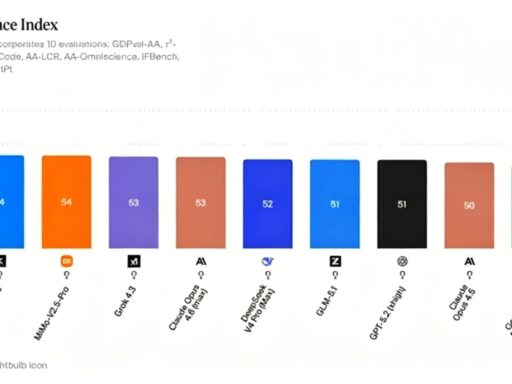

2. The “Time Horizon” Expansion: AI Working Alone

Perhaps the most critical metric is the “Time Horizon.” This measures the length of the tasks an AI can complete independently, expressed as how long the same task would take a skilled human.

Progress here has been exponential:

- 2022: GPT-3.5 could handle tasks taking humans about 30 seconds.

- 2023: GPT-4 increased this to 4 minutes.

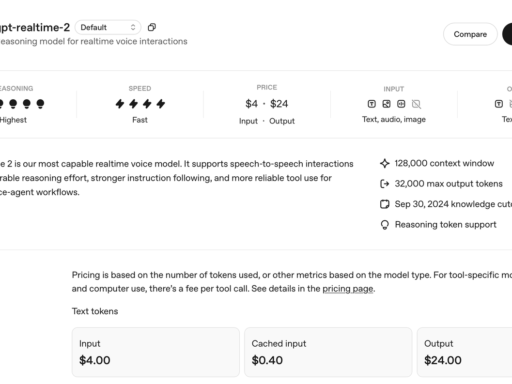

- 2024: The o1 model pushed it to 40 minutes.

- 2025: GPT-5.2 High reached 6 hours of independent work.

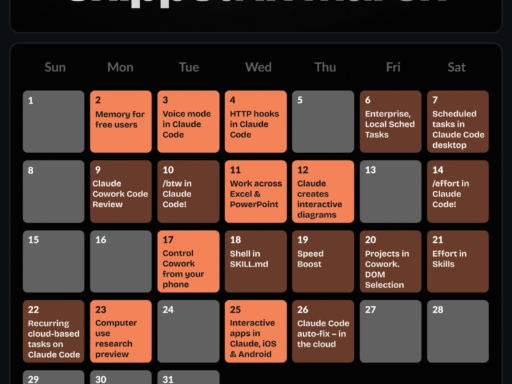

- 2026: Models like Opus 4.6 can now work reliably for 12 hours.

Experts like Ajeya Cotra predict that by the end of 2026, AI systems will be able to complete tasks that take skilled humans 100 hours. This is the threshold where AI transitions from a “helper” to an “autonomous researcher.”
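To see what “exponential” means for the time-horizon figures listed above, here is a minimal sketch that fits a straight line to the base-2 logarithm of those figures and reads off an implied doubling time. The function names are made up for illustration, the year labels are coarse (one point per calendar year), and five data points are far too few for a serious forecast, so treat this as arithmetic on the article's numbers rather than anyone's official methodology.

```python
import math

# (year, task length in human minutes), copied from the list above.
# The year labels are coarse, so the fit is only a rough illustration.
TIME_HORIZONS = [
    (2022, 0.5),   # about 30 seconds
    (2023, 4),
    (2024, 40),
    (2025, 360),   # 6 hours
    (2026, 720),   # 12 hours
]


def fit_log2_trend(points):
    """Least-squares line through (year, log2(minutes)).

    Returns (slope, intercept), where slope is doublings per year.
    """
    xs = [year for year, _ in points]
    ys = [math.log2(minutes) for _, minutes in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def doubling_time_months(points):
    """Months needed for the time horizon to double under the fitted trend."""
    slope, _ = fit_log2_trend(points)
    return 12 / slope


def year_reaching(points, target_minutes):
    """Year (as a decimal) at which the fitted trend hits target_minutes."""
    slope, intercept = fit_log2_trend(points)
    return (math.log2(target_minutes) - intercept) / slope


if __name__ == "__main__":
    print(f"Implied doubling time: {doubling_time_months(TIME_HORIZONS):.1f} months")
    # 100 hours of skilled human work = 6000 minutes
    print(f"Trend reaches 100 hours around: {year_reaching(TIME_HORIZONS, 6000):.1f}")
```

Fitting in log space is the design choice that matters here: a steady doubling shows up as a straight line, so the slope directly gives doublings per year.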

AI is Mastering Core Scientific Skills

Building an AI isn’t just about coding; it requires scientific research skills. The data shows AI is rapidly conquering these too.

Reproducing Scientific Papers (The CORE-Bench Test)

A key part of research is reading a paper and running the code to verify the results.

- September 2024: The best AI scored 21.5% on the CORE-Bench, which tests the ability to reproduce research.

- December 2025: The benchmark was essentially “solved,” with the Opus 4.5 model hitting 95.5%.

This means AI can now autonomously read, understand, and execute complex scientific research, a capability that previously required PhD-level expertise.

Winning Kaggle Competitions (MLE-Bench)

Kaggle is a platform where data scientists compete to build the best machine learning models. In MLE-Bench, AI systems are tested on their ability to build such models from scratch.

- October 2024: The best AI scored 16.9%.

- February 2026: Google’s Gemini 3 scored 64.4%, showing it can now build sophisticated machine learning applications almost entirely on its own.
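For readers who want to compare the two research benchmarks in this section side by side, the short sketch below computes the gain in percentage points and the average gain per month from the dates and scores quoted above. The `Score` class and helper names are hypothetical, invented for this example. A per-month average is a crude summary, since benchmark scores are capped at 100% and do not grow linearly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    year: int
    month: int
    percent: float


# First and latest scores as quoted in this article.
BENCHMARKS = {
    "CORE-Bench": (Score(2024, 9, 21.5), Score(2025, 12, 95.5)),
    "MLE-Bench": (Score(2024, 10, 16.9), Score(2026, 2, 64.4)),
}


def months_between(first: Score, last: Score) -> int:
    """Whole calendar months from the first score to the last."""
    return (last.year - first.year) * 12 + (last.month - first.month)


def points_gained(first: Score, last: Score) -> float:
    """Improvement in percentage points."""
    return last.percent - first.percent


def points_per_month(first: Score, last: Score) -> float:
    """Average percentage points gained per month (a crude linear summary)."""
    return points_gained(first, last) / months_between(first, last)


if __name__ == "__main__":
    for name, (first, last) in BENCHMARKS.items():
        print(
            f"{name}: +{points_gained(first, last):.1f} points over "
            f"{months_between(first, last)} months "
            f"({points_per_month(first, last):.2f} points/month)"
        )
```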

Kernel Design and Optimization

“Kernel design” is a highly technical task: writing the low-level routines (kernels) that run computations directly on hardware, specifically GPUs. It is crucial for making AI models run fast.

Recently, AI has shown it can optimize these systems better than humans in some cases. For example, Anthropic tested AI on optimizing a small language model:

- May 2025: AI achieved a 2.9x speedup.

- April 2026: The latest AI achieved a 52x speedup.

- Human Baseline: A skilled human researcher typically achieves only a 4x speedup after hours of work.

The AI isn’t just coding; it is building systems that run significantly faster than what human engineers can create.
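To put the speedup figures above in perspective, the following sketch works out the ratios between them and what each would mean for a job that originally takes one hour. The one-hour job and the helper names are assumptions chosen for illustration. They do not come from Anthropic's test.

```python
# Speedup factors as quoted in the list above.
SPEEDUPS = {
    "AI, May 2025": 2.9,
    "AI, April 2026": 52.0,
    "Human baseline": 4.0,
}


def ratio(new_speedup: float, old_speedup: float) -> float:
    """How many times larger one speedup factor is than another."""
    return new_speedup / old_speedup


def runtime_after_speedup(original_minutes: float, speedup: float) -> float:
    """Runtime of a job after applying a speedup factor."""
    return original_minutes / speedup


if __name__ == "__main__":
    latest = SPEEDUPS["AI, April 2026"]
    print(f"Latest AI vs. human baseline: {ratio(latest, SPEEDUPS['Human baseline']):.1f}x")
    print(f"Latest AI vs. May 2025 AI: {ratio(latest, SPEEDUPS['AI, May 2025']):.1f}x")

    # Hypothetical job that takes 60 minutes before any optimization.
    for label, speedup in SPEEDUPS.items():
        minutes = runtime_after_speedup(60, speedup)
        print(f"{label}: 60-minute job finishes in {minutes:.1f} minutes")
```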

What This Means for the Future

When we put all this evidence together, a startling picture emerges. Jack Clark argues that AI has already automated the “engineering” component of AI development.

- Synthetic Teams: AI systems can now manage each other. One AI acts as the “manager,” checking the work of another AI “engineer.”

- End-to-End Automation: AI can now perform almost every step of development: reading papers, writing code, running tests, and optimizing performance.

Clark believes we are approaching a moment where AI systems will be able to train their own successors. He describes this potential timeline:

- Near Future: We may see a model training a smaller, better version of itself—a “proof of concept.”

- By 2028: This could scale up to the most advanced frontier models, creating a cycle where AI accelerates its own evolution.

The Controversy: Is It Real?

Not everyone agrees. Critics like University of Washington professor Pedro Domingos argue that AI has been able to “build itself” in basic ways since the 1950s (with languages like LISP). The real question is whether this creates increasing returns, which is not yet proven.
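The “increasing returns” question can be made concrete with a toy model. In the sketch below, each generation of a system adds `rate * capability ** exponent` to its successor's capability. This formula, its parameters, and the function names are all assumptions made up for illustration; they come from neither Clark nor Domingos. The point is only that the same self-improvement loop behaves very differently depending on the exponent: above 1 the relative gain per generation keeps growing, at exactly 1 it stays constant, and below 1 it shrinks.

```python
def simulate(generations: int, rate: float = 0.5,
             exponent: float = 1.0, start: float = 1.0) -> list[float]:
    """Toy self-improvement loop (illustrative assumption, not a real model).

    Each generation builds a successor whose capability is higher by
    rate * capability ** exponent.
    """
    capability = start
    history = [capability]
    for _ in range(generations):
        capability += rate * capability ** exponent
        history.append(capability)
    return history


def relative_gains(history: list[float]) -> list[float]:
    """Fractional improvement from each generation to the next."""
    return [(after - before) / before
            for before, after in zip(history, history[1:])]


if __name__ == "__main__":
    for exponent in (0.8, 1.0, 1.2):
        gains = relative_gains(simulate(6, exponent=exponent))
        formatted = ", ".join(f"{g:.2f}" for g in gains)
        print(f"exponent={exponent}: relative gain per generation = {formatted}")
```

Which regime real AI development falls into is exactly what the critics say has not been demonstrated.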

Others question the sudden jump in probability. Why does the chance of self-improvement spike so sharply in 2027-2028? Is this based on solid data or just speculation?

Furthermore, some argue that these benchmarks might just test the AI’s ability to memorize code found on the internet, rather than true creativity. However, proponents counter that creating a working, optimized system from scratch—even with access to information—requires genuine engineering intelligence.

Conclusion: Crossing the Rubicon

Jack Clark uses the phrase “Crossing the Rubicon” to describe this moment. The phrase comes from Julius Caesar’s crossing of the Rubicon river in 49 BC and has come to mean passing a point of no return.

If his 60% prediction holds true, humanity is sailing into uncharted waters. The development of AI will no longer be limited by the speed of human learning or the number of human researchers. Instead, millions of “synthetic researchers” could work 24/7 to push technology forward.

Whether this leads to a utopia of abundance or an unchecked superintelligence remains to be seen. But one thing is certain: the era of AI needing humans is coming to an end. The race for recursive self-improvement has officially begun.